The ABB Eyecatcher

By combining gesture recognition with eye tracking, we prototyped what the future of interaction in an industrial control room environment might look like.

The brief for this project posed three questions: What might human-machine interaction in the process industry look like in 5 to 10 years? How will the operator of the future interact with a multi-screen remote operations environment? Are there alternatives to the traditional screen, keyboard and mouse?

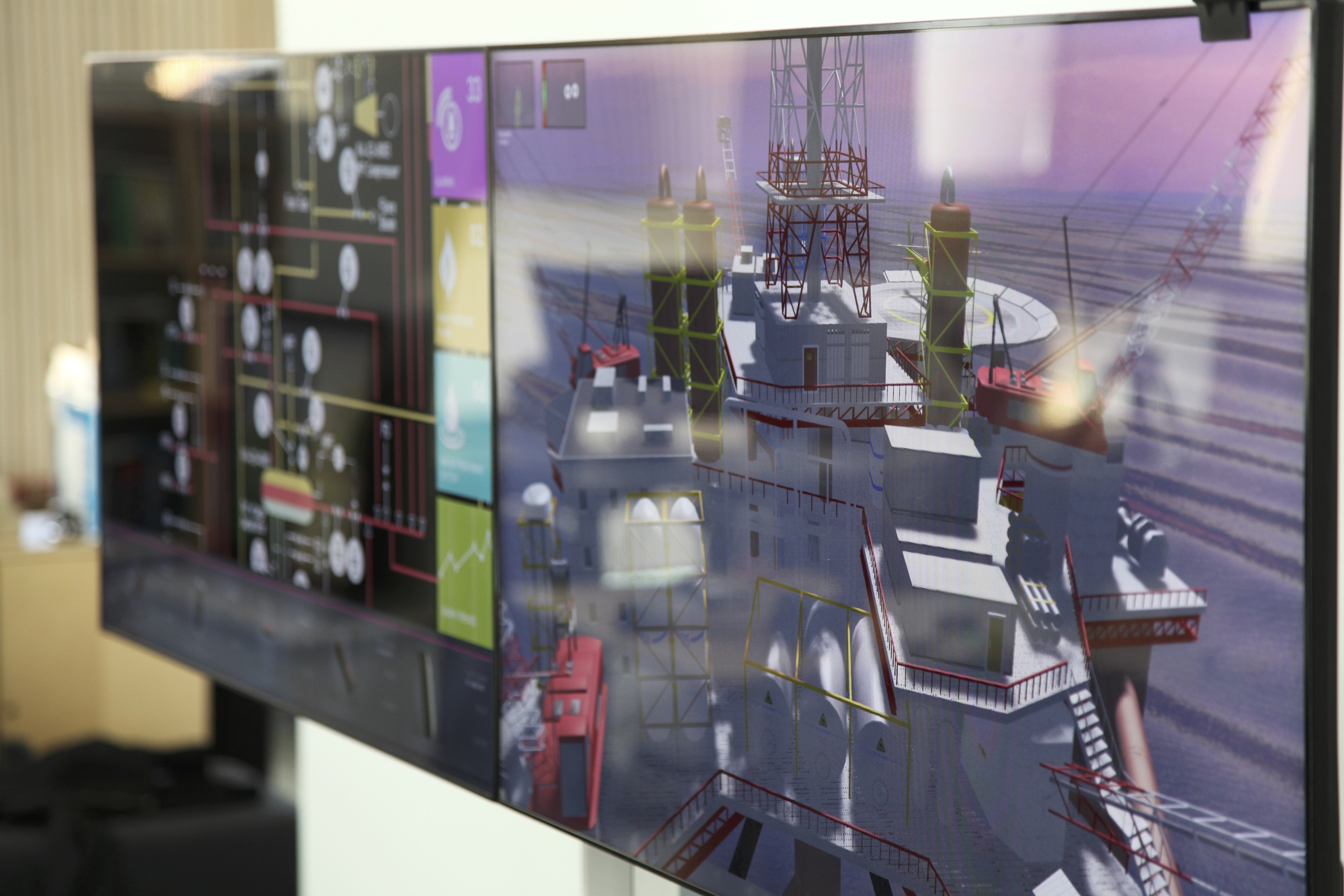

In this fully functional prototype, we combine gesture recognition and eye tracking so that users can use physical gestures to interact with whatever they are looking at. A Microsoft Kinect senses the user's gestures: its 3D camera detects movements in three dimensions, making it possible to steer objects on the screen. Eye tracking technology from Tobii Technology enables users to interact with the computer using their gaze.

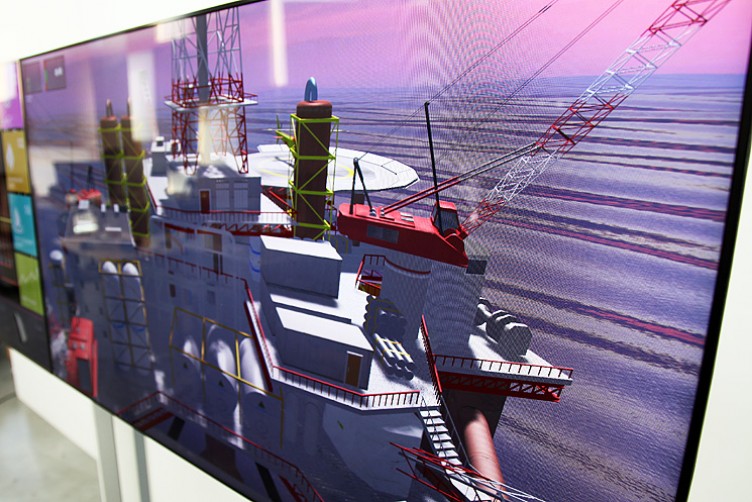

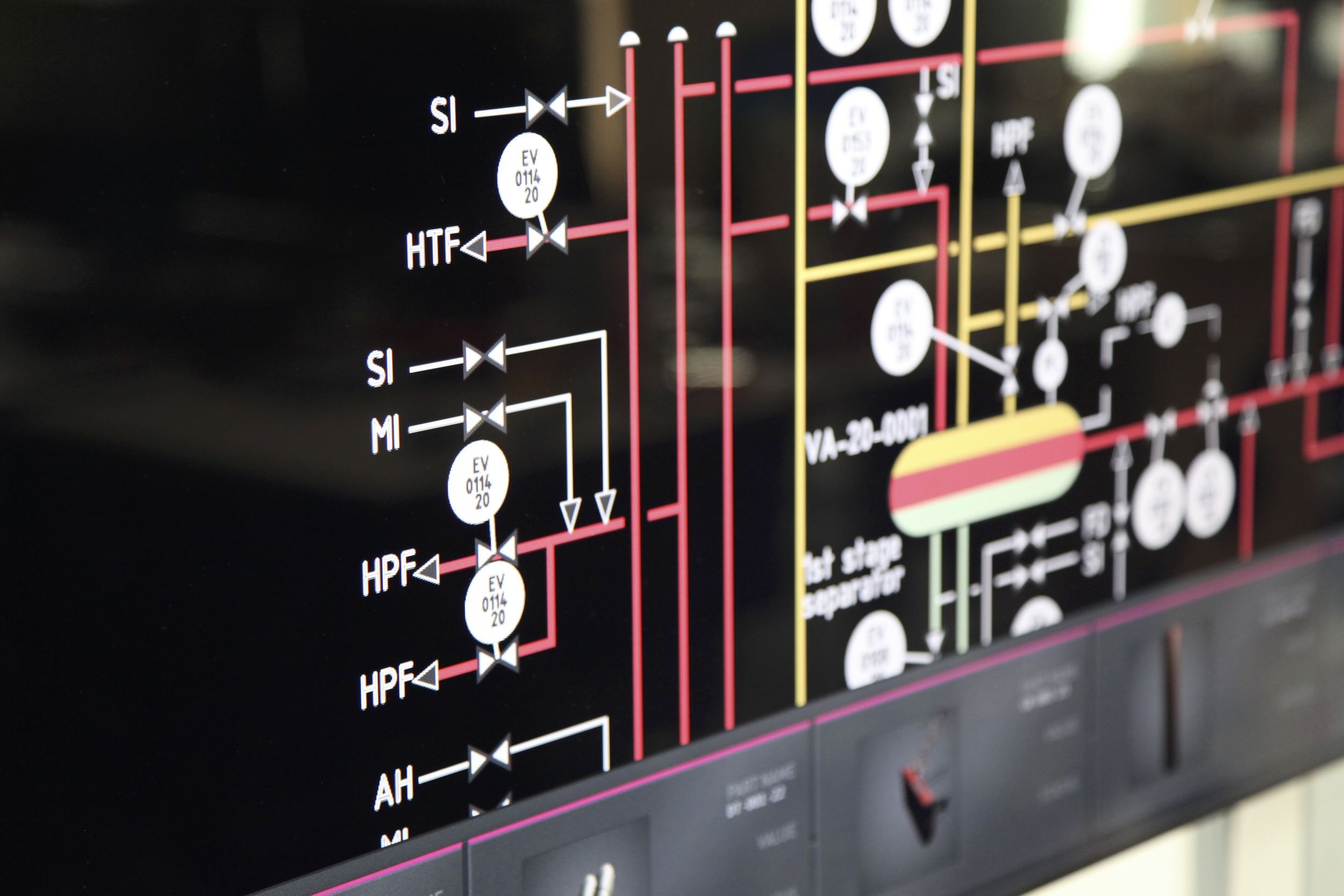

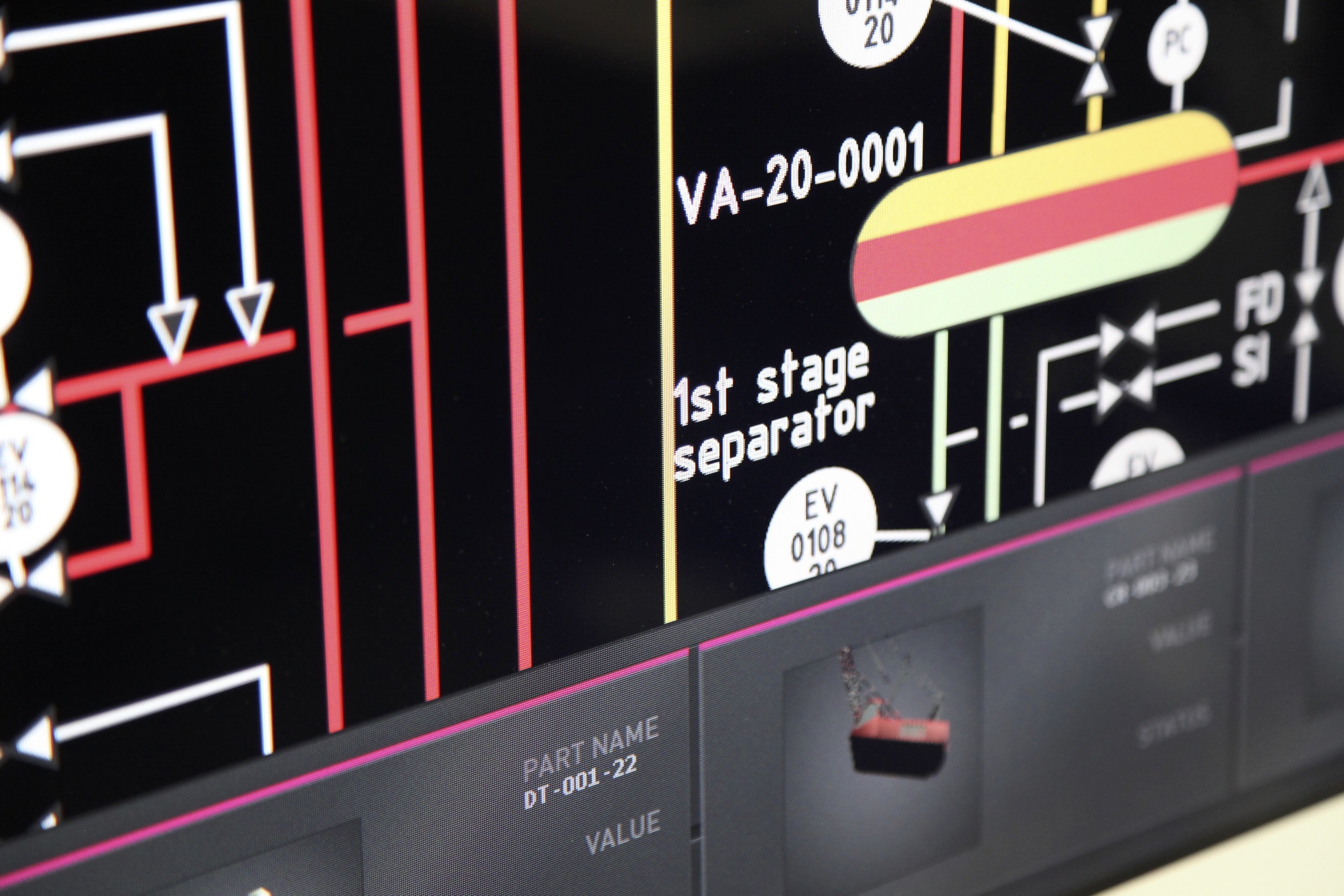

The prototype consists of two flat screens placed a couple of meters in front of the operator. The right-hand screen shows a 3D representation of an oil rig. By swiping an arm vertically, the operator can navigate between the different levels of the oil rig model. Instead of using a mouse to highlight objects and make them selectable, the operator simply looks at them. A selected object can then be moved to the left-hand screen with a swipe gesture. There, in the process view, the operator can interact in more detail with a specific object, such as a compressor, and see how it is performing. Menu items can also be expanded by gaze to reveal more information.
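The interaction pattern described above, where gaze chooses the target and a gesture acts on it, can be sketched as a small event handler. This is a minimal illustration, not the prototype's actual code: the class `GazeSwipeController`, its methods, the swipe direction strings, and the assumption that levels are ordered from bottom to top are all hypothetical, and no real Kinect or Tobii API is used. Hardware drivers are assumed to deliver already-recognized gaze targets and swipe directions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GazeSwipeController:
    """Hypothetical fusion of gaze selection and swipe gestures."""

    levels: List[str]                  # rig levels, assumed ordered bottom to top
    level_index: int = 0               # level currently shown on the right-hand screen
    gazed_object: Optional[str] = None # object the operator is looking at, if any
    process_view: Optional[str] = None # object shown on the left-hand screen

    def on_gaze(self, obj: Optional[str]) -> None:
        # Gaze replaces the mouse: whatever is looked at becomes the highlighted target.
        self.gazed_object = obj

    def on_swipe(self, direction: str) -> None:
        if direction in ("up", "down"):
            # Vertical swipes move between levels, clamped to the model's range.
            step = 1 if direction == "up" else -1
            self.level_index = max(0, min(len(self.levels) - 1, self.level_index + step))
        elif direction == "left" and self.gazed_object is not None:
            # A sideways swipe sends the gazed-at object to the process view.
            self.process_view = self.gazed_object

    @property
    def current_level(self) -> str:
        return self.levels[self.level_index]


if __name__ == "__main__":
    ctrl = GazeSwipeController(levels=["lower deck", "main deck", "drill floor"])
    ctrl.on_swipe("up")
    ctrl.on_gaze("compressor")
    ctrl.on_swipe("left")
    print(ctrl.current_level, ctrl.process_view)
```

Keeping gaze and gesture as separate event streams means a swipe only needs to know what is currently being looked at; a sideways swipe with nothing under the operator's gaze is simply ignored.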

About this Project

This project was carried out in 2012 at the Interactive Institute Swedish ICT for our client ABB Corporate Research.

Design Team

- Daniel Fallman

- Björn Yttergren

- Ru Zarin

- Kent Lindberg

- Ambra Trotto

- Fredrik Nilbrink